Aligning Profit with Purpose: Why Most Purpose Strategies Fail (And How Integrated Governance Fixes It)

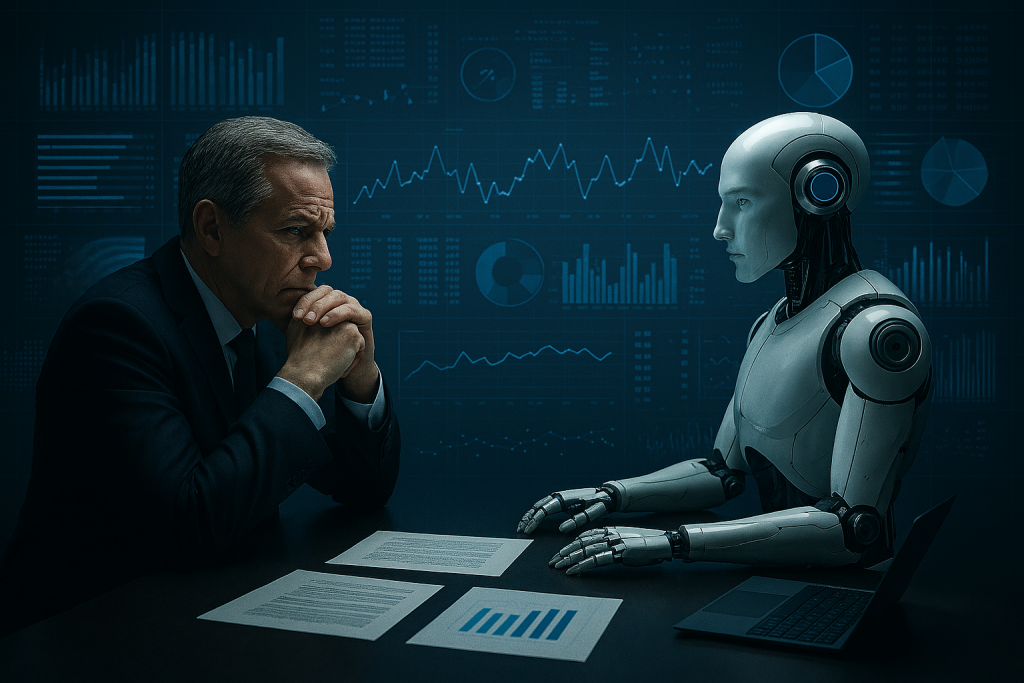

The Purpose Paradox: Strategy Versus Execution

According to Chief Executives for Corporate Purpose’s (CECP) Giving in Numbers (2025 edition), 87% of companies reported having a corporate purpose statement in 2024, yet only 67% had embedded metrics to assess whether business practices aligned with that purpose. The result is a significant execution gap: ambitious purpose declarations collide with supply chain decisions, AI deployment choices, pricing models, and board oversight practices that contradict stated purpose entirely. This gap is not a communication failure. It is a governance failure.

The stakes are real. Purpose-aligned companies, that is, those with metrics linking business practices to stated purpose, delivered a 31% increase in median pre-tax profit between 2023 and 2024, compared to just 3% for companies without such alignment. Yet purpose initiatives fail because organisations treat purpose as a marketing narrative rather than a governance discipline embedded in decision-making.

Why Purpose Matters Now: The Business Imperative

Three forces make integrated purpose governance non-negotiable in 2026:

Integrated Governance Mandate: EU AI Act Article 14 and DSA Article 27 impose overlapping transparency and human oversight requirements that demand coordinated implementation. Siloed approaches, where data protection policies ignore AI deployment opacity, or sustainability claims lack supply-chain verification, create enforcement gaps across GDPR, sustainability reporting requirements, and AI Act obligations. Integrated governance is regulatory defence, not administrative overhead.

Investor Scrutiny: Purpose misalignment is a red flag for governance risk. Investor scrutiny is shifting from stated purpose to execution evidence: boards’ integrated skills (AI governance, sustainability), evaluation practices, and whether metrics tie purpose to operating decisions.

Workforce Expectations: Gen Z and millennial workers prioritise purpose: 89-92% say it’s important to job satisfaction, and 44-45% have left roles due to perceived misalignment, according to Deloitte’s 2025 Gen Z and Millennial Survey. Organisations treating purpose as performative messaging rather than integrated governance face sustained attrition as these cohorts become the workforce majority.

The Three Governance Gaps That Kill Purpose Initiatives

Gap 1: Why Your Board Can’t Oversee What It Doesn’t Understand

Purpose strategy requires board-level strategic thinking, yet most boards lack both the knowledge and governance infrastructure to execute it. According to the BCG-INSEAD Board ESG Pulse Check (2022), 44% of directors cite insufficient ESG and purpose knowledge as the primary barrier to effective oversight, and 43% do not believe their organisation has the ability to execute its stated purpose goals. Additionally, 70% of directors say they are only moderately, or not at all, effective at increasing oversight on purpose integration into corporate strategy and governance.

The result is fragmented accountability. When purpose oversight lands in a sub-committee or is left to the Chief Sustainability Officer while the C-suite focuses on short-term financial metrics, operational decisions default to profit maximisation. Supply chain practices contradict environmental purpose. Hiring practices ignore diversity commitments. AI deployment violates data protection principles the company publicly embraces.

The governance fix: Purpose must move from compliance-mindset sub-committee work to strategic board-level ownership, with explicit accountability for cross-functional alignment between purpose and operational execution.

Gap 2: When Marketing Declares Purpose But Operations Contradicts It

Most companies define purpose centrally but execute it in silos. Marketing communicates the purpose statement. HR uses it for recruitment. Finance ignores it. Operations proceeds with established supplier relationships and cost-cutting measures that contradict stated values. Procurement officers receive no guidance on how to evaluate vendors through a purpose lens.

Consider one FTSE 100 financial services firm that publicly committed to ethical labour practices while procurement incentives rewarded lowest-cost suppliers, creating invisible modern slavery risk in third-tier supply chains. The purpose statement won awards; the operational reality created regulatory exposure.

This fragmentation is what creates the crisis: companies publicly commit to ethical labour practices while supply chains remain opaque. They declare environmental stewardship while operational metrics incentivise waste. They profess diversity commitment while promotion data tells a different story.

The governance fix: Embed purpose into operational decision frameworks across all functions simultaneously. Define “aligned with purpose” explicitly for supply chain, procurement, technology deployment, and capital allocation. Measure and report on alignment quarterly. Create accountability at department level, not just corporate level.

Gap 3: How Counting Activities Hides Zero Impact

While 67% of purpose-aligned companies now measure business practice alignment with purpose (up from 58% in 2020), many still rely on output metrics (activities conducted) rather than outcome metrics (actual impact and behavioural change). This creates performative measurement: companies count volunteer hours but don’t track whether employee retention improved. They report community investments but ignore whether stakeholder trust actually increased.

Without rigorous impact measurement, purpose becomes a cost centre that boards question and budget cuts eliminate when times are tight. With proper measurement showing ROI, purpose becomes a strategic asset.

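
For teams that keep their purpose metrics in a spreadsheet or data register, the output-versus-outcome distinction can be checked mechanically. The Python sketch below is illustrative only: the `Metric` record, the `programmes_without_outcomes` helper, and the sample register are hypothetical names and data invented for this example, not drawn from any cited report. It flags programmes that count activity but track no measured impact.

```python
from dataclasses import dataclass
from enum import Enum


class MetricKind(Enum):
    OUTPUT = "output"    # an activity counted, e.g. volunteer hours logged
    OUTCOME = "outcome"  # a change observed, e.g. retention improved


@dataclass(frozen=True)
class Metric:
    programme: str
    name: str
    kind: MetricKind


def programmes_without_outcomes(metrics: list[Metric]) -> list[str]:
    """Return programmes that report activity but no measured impact."""
    all_programmes = {m.programme for m in metrics}
    with_outcomes = {
        m.programme for m in metrics if m.kind is MetricKind.OUTCOME
    }
    return sorted(all_programmes - with_outcomes)


# Hypothetical register, invented for illustration only.
REGISTER = [
    Metric("volunteering", "volunteer hours logged", MetricKind.OUTPUT),
    Metric("volunteering", "retention of participants", MetricKind.OUTCOME),
    Metric("community investment", "amount invested", MetricKind.OUTPUT),
]

if __name__ == "__main__":
    # Lists every programme whose metrics are all output-only.
    print(programmes_without_outcomes(REGISTER))
```

A check like this would not measure impact itself; it only shows where a quarterly report relies entirely on activity counts, which is where a board might ask for an outcome metric to be defined.
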
The Business Case: Purpose as Competitive Advantage

Financial Performance: Purpose-aligned companies delivered 31% median pre-tax profit growth between 2023 and 2024, compared with 3% for peers without such alignment, according to CECP’s Giving in Numbers report. EY’s CEO Imperative Series reinforces the pattern at market level: purpose-driven businesses outperform the market by 5–7% annually.

Employee Retention and Engagement: Organisations with clear purpose and aligned operations show 40% higher retention rates. Employees who engage with purpose-driven programmes show a 29% lower attrition rate at companies like Cisco, and RTX found employees engaging in volunteering programmes were three times more likely to stay. Gallup data shows highly engaged teams (enabled by purpose clarity and alignment) deliver 23% higher profitability, 18% greater productivity, and 10% higher customer loyalty.

Talent Attraction: 82% of employees believe a company must have clear purpose; generational research confirms this is non-negotiable for competitive talent acquisition.

Stakeholder Trust: Companies demonstrating authentic purpose-driven practices report deeper stakeholder trust, stronger community relationships, and resilience during crises, advantages that transcend quarterly earnings.

Crafting an Integrated Purpose Strategy: From Declaration to Governance

Developing purpose strategy that actually drives execution requires moving beyond traditional declaration approaches to a governance-embedded framework:

Step 1: Define Purpose Through Stakeholder Lens

Purpose must answer: What systemic challenge is our organisation uniquely positioned to address? Not what sounds good to investors or customers, but where does our core business model